Factory Acceptance Testing (FAT) for Leak Detection Systems

What to Watch Out for

When investing in a leak detection system for production quality control, verifying its performance before installation is essential. A robust Factory Acceptance Test (FAT) verifies that the system meets the functional, accuracy, and compliance requirements defined in your specifications. This article highlights the most critical aspects to evaluate during an FAT so that leak detection is reliable from day one.

Why Factory Acceptance Testing Is Critical for Leak Detection Systems

A comprehensive FAT confirms that the leak detection system performs under realistic conditions, reduces the risk of unexpected failures after installation, and verifies compliance with the applicable standards. This step matters most in industries where leak rates directly affect safety, product lifetime, or regulatory compliance.

1. How to Define Effective FAT Acceptance Criteria for Leak Detection Systems

Set Clear Performance Metrics

To ensure alignment between supplier and end user, the FAT must include:

- Defined accuracy and precision requirements

- Measurement uncertainty limits

- Compliance with industry‑specific standards

- Accepted environmental operating ranges

Establish Calibration and Traceability Requirements

Calibration expectations should include:

- Valid calibration certificates

- Traceability to national or international standards

- Defined recalibration intervals

Clear criteria eliminate ambiguity and support a smooth approval process.
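
As a rough illustration, the criteria above can be written down in a machine-readable form so that supplier and end user evaluate the FAT results against the same numbers. The sketch below is hypothetical: the field names, the limits, and the `evaluate` helper are assumptions made for illustration and do not come from any standard.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Hypothetical FAT limits agreed between supplier and end user."""
    max_bias_percent: float           # accuracy: deviation from the certified leak
    max_repeatability_percent: float  # precision: spread of repeated readings
    max_uncertainty_percent: float    # measurement uncertainty limit
    max_certificate_age_days: int     # recalibration interval of leak standards


def evaluate(criteria: AcceptanceCriteria, results: Dict[str, float]) -> List[str]:
    """Return the names of all criteria that the FAT results violate."""
    limits = {
        "bias_percent": criteria.max_bias_percent,
        "repeatability_percent": criteria.max_repeatability_percent,
        "uncertainty_percent": criteria.max_uncertainty_percent,
        "certificate_age_days": criteria.max_certificate_age_days,
    }
    return [name for name, limit in limits.items() if results[name] > limit]


# Example values are placeholders, not recommendations.
criteria = AcceptanceCriteria(10.0, 5.0, 15.0, 365)
fat_results = {
    "bias_percent": 4.2,
    "repeatability_percent": 6.1,
    "uncertainty_percent": 12.0,
    "certificate_age_days": 120,
}
print(evaluate(criteria, fat_results))
```

With these placeholder numbers only the repeatability limit is exceeded, so the list contains a single entry that both parties can discuss.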

2. Developing a Realistic FAT Test Plan for Leak Test Systems

Simulate Actual Production Conditions

Your test plan should mirror real operating parameters:

- Matching measurement times

- Identical tracer gas concentrations

- Equivalent flow paths and cycle times

Include Functional and Performance Test Scenarios

A comprehensive test plan must cover:

- Accuracy and repeatability

- System sensitivity

- Response time under realistic conditions

- Documentation and safety validation

This ensures that the FAT produces meaningful, production‑relevant results.

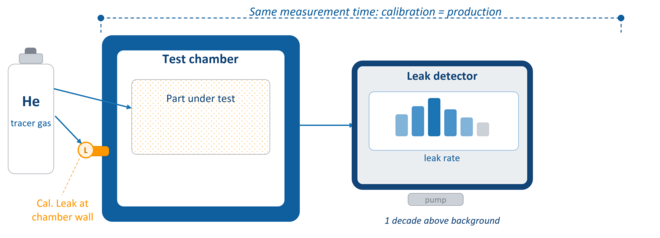

3. Ensuring Proper Calibration During FAT of Leak Detectors

Use the Same Measurement Time for Calibration and Production

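Short measurement times are essential in automated leak testing, but with a short cycle the signal has often not reached its final value when the reading is taken.

The calibration factor therefore depends on the measurement time. Calibrating with a different measurement time than the one used in production can lead to: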

- Overestimated sensitivity

- Incorrect leak rate readings

- Missed production leaks
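
The effect of mismatched measurement times can be estimated with a simple model. The sketch below assumes a first-order signal rise with a time constant equal to chamber volume divided by effective helium pumping speed. Real systems may respond differently, and the function names and numbers are illustrative assumptions.

```python
import math


def signal_fraction(t_s: float, tau_s: float) -> float:
    """Fraction of the final leak signal reached after t_s seconds.

    Assumes a first-order response with time constant tau_s.
    """
    return 1.0 - math.exp(-t_s / tau_s)


def displayed_to_true_ratio(t_cal_s: float, t_meas_s: float, tau_s: float) -> float:
    """Ratio of displayed to true leak rate when the system was calibrated
    at t_cal_s but production parts are measured at t_meas_s."""
    return signal_fraction(t_meas_s, tau_s) / signal_fraction(t_cal_s, tau_s)


# Hypothetical example: 20 l chamber, 10 l/s effective helium pumping speed.
tau = 20.0 / 10.0  # time constant V / S in seconds
ratio = displayed_to_true_ratio(t_cal_s=10.0, t_meas_s=2.0, tau_s=tau)
print(f"Displayed leak rate is {ratio:.0%} of the true value")
```

Under these assumptions, a system calibrated with a 10 s measurement but operated with a 2 s measurement displays only about 64% of the true leak rate, so a leak slightly above the reject limit would pass.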

Calibrate Using the Same Tracer Gas Concentration

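The helium signal scales with the tracer gas concentration. If calibration uses 100% helium but production uses diluted helium, the same leak produces a proportionally smaller signal, and small leaks may go undetected.

If the concentrations cannot be matched, correction factors are required to compensate for the difference.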
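
A concentration correction can be sketched as follows. The example assumes that the detector signal scales linearly with the helium fraction in the fill gas, which is a simplification, and the function names are hypothetical.

```python
def helium_signal(total_leak_rate: float, helium_fraction: float) -> float:
    """Helium leak rate seen by the detector for a given total gas leak rate.

    Assumes the signal scales linearly with the helium fraction (0 < f <= 1).
    """
    if not 0.0 < helium_fraction <= 1.0:
        raise ValueError("helium_fraction must be in (0, 1]")
    return total_leak_rate * helium_fraction


def corrected_leak_rate(displayed_rate: float, helium_fraction: float) -> float:
    """Convert a reading calibrated with 100% helium into the total leak rate."""
    if not 0.0 < helium_fraction <= 1.0:
        raise ValueError("helium_fraction must be in (0, 1]")
    return displayed_rate / helium_fraction


# Hypothetical example: reject limit 1e-5 mbar·l/s, production gas with 10% helium.
reject_limit = 1e-5
reading_at_limit = helium_signal(reject_limit, helium_fraction=0.10)
print(f"Reading at the reject limit: {reading_at_limit:.1e} mbar·l/s")
print(f"Corrected leak rate: {corrected_leak_rate(reading_at_limit, 0.10):.1e} mbar·l/s")
```

In this example a part leaking exactly at the reject limit produces a reading ten times lower than the limit, so an uncorrected threshold would let it pass.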

Install Calibration Leak Directly at the Test Chamber

Leak location influences response time and measured leak rate.

A leak placed close to the detector will appear larger than the same leak placed inside the chamber, which leads to false assumptions about system sensitivity.

Choose Calibration Leaks One Decade Above Background

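A calibration leak at least one decade (a factor of 10) above the background signal:

- Improves signal stability

- Improves measurement accuracy

- Reduces the impact of background fluctuations

This is essential for verifying sensitivity to small leaks.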
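
The one-decade rule is easy to check numerically. The following sketch uses hypothetical helper names and example values. It also estimates how much a given background fluctuation contributes relative to the calibration signal.

```python
def min_calibration_leak(background: float, factor: float = 10.0) -> float:
    """Smallest calibration leak that sits `factor` times above the background."""
    return background * factor


def is_suitable(calibration_leak: float, background: float, factor: float = 10.0) -> bool:
    """True if the calibration leak is at least one decade above the background."""
    return calibration_leak >= min_calibration_leak(background, factor)


def fluctuation_share(calibration_leak: float, background_fluctuation: float) -> float:
    """Background fluctuation expressed as a fraction of the calibration signal."""
    return background_fluctuation / calibration_leak


# Hypothetical example values in mbar·l/s.
background = 2e-9
print(is_suitable(5e-8, background))   # leak well above one decade
print(is_suitable(1e-8, background))   # leak only a factor of 5 above background
print(f"{fluctuation_share(5e-8, 5e-10):.1%} of the signal is background noise")
```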

4. Documentation and Traceability Requirements in Leak Detection FAT

Ensure that:

- Every leak standard includes a valid certificate

- Uncertainty values and traceability paths are documented

- Calibration validity has not expired (typically 1 year)
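
These checks can be automated before the FAT starts. The sketch below is a minimal example with a hypothetical `LeakCertificate` record. The one-year validity is taken from the typical value mentioned above and should be replaced with the interval stated on the actual certificate.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass(frozen=True)
class LeakCertificate:
    """Hypothetical record of a leak standard's calibration certificate."""
    serial: str
    calibrated_on: date
    uncertainty_percent: float  # 0 means "not documented" in this sketch
    traceable_to: str           # e.g. name of the reference laboratory
    validity_days: int = 365    # typical value; check the actual certificate


def certificate_problems(cert: LeakCertificate, fat_date: date) -> List[str]:
    """List everything that would block use of this leak standard in the FAT."""
    problems = []
    if fat_date > cert.calibrated_on + timedelta(days=cert.validity_days):
        problems.append("calibration expired")
    if cert.uncertainty_percent <= 0:
        problems.append("uncertainty not documented")
    if not cert.traceable_to.strip():
        problems.append("traceability not documented")
    return problems


# Placeholder data for illustration only.
cert = LeakCertificate("TL-001", date(2023, 1, 10), 10.0, "")
print(certificate_problems(cert, fat_date=date(2024, 3, 1)))
```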

Avoid relying on the detector's internal calibration leak alone, especially when high‑capacity pumps reduce the portion of tracer gas reaching the detector during normal operation.
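
The size of this effect can be estimated with a simple partial-flow model. The sketch below assumes that the detector and an auxiliary pump draw gas in parallel from the same point, so the tracer gas splits in proportion to the pumping speeds. The function name and the example speeds are assumptions.

```python
def detector_fraction(s_detector: float, s_auxiliary: float) -> float:
    """Fraction of the tracer gas that reaches the leak detector.

    Assumes detector and auxiliary pump act in parallel on the same volume,
    so the gas flow divides in proportion to the pumping speeds (same units).
    """
    if s_detector <= 0 or s_auxiliary < 0:
        raise ValueError("pumping speeds must be positive")
    return s_detector / (s_detector + s_auxiliary)


# Hypothetical example: detector inlet 1 l/s, auxiliary pump 9 l/s for helium.
fraction = detector_fraction(1.0, 9.0)
print(f"{fraction:.0%} of the tracer gas reaches the detector")
```

In this example, a calibration based only on an internal leak, whose gas does not pass the auxiliary pump, would overstate the sensitivity for leaks in the chamber by a factor of ten.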

5. Verifying the Smallest Detectable Leak Rate

Use a Verification Leak Matching the Reject Leak Rate

For accurate acceptance testing:

- Use the same tracer concentration, fill pressure, and measurement time as in production

- Position the verification leak at the most distant point from the detector

- Use customized screw‑in leaks for the exact reject limit

This confirms actual system sensitivity, not just calibration performance.
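
One way to evaluate such a verification run is to compare repeated cycles with the verification leak installed against cycles with a known tight part. The sketch below is an illustration under assumed names and values. The `trigger` level is a hypothetical reject threshold set in the test system.

```python
from typing import Sequence


def verification_passed(
    leak_readings: Sequence[float],
    blank_readings: Sequence[float],
    trigger: float,
) -> bool:
    """True if every cycle with the verification leak is rejected and
    every cycle with a tight part passes."""
    all_rejected = all(r >= trigger for r in leak_readings)
    all_accepted = all(r < trigger for r in blank_readings)
    return all_rejected and all_accepted


def separation(leak_readings: Sequence[float], blank_readings: Sequence[float]) -> float:
    """Ratio between the weakest leak reading and the highest blank reading."""
    return min(leak_readings) / max(blank_readings)


# Placeholder readings in mbar·l/s.
with_leak = [9.1e-6, 8.7e-6, 9.4e-6, 8.9e-6]
tight_part = [6.0e-7, 7.5e-7, 5.2e-7, 6.8e-7]
print(verification_passed(with_leak, tight_part, trigger=5e-6))
print(f"Separation factor: {separation(with_leak, tight_part):.1f}")
```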

6. Ensuring the Reliability of the Results

a) Gauge R&R Analysis for Leak Detection Systems

Why Gauge R&R Matters

Gauge Repeatability & Reproducibility quantifies variation caused by:

- The instrument (repeatability)

- The operator (reproducibility)

Acceptable Gauge R&R Levels

- < 10% = ideal for precision leak testing

- 10% to 30% = conditionally acceptable, depending on the application

- > 30% = not acceptable

If production parts vary too much naturally (e.g., filled battery cells), certified test leaks should be used in the R&R study.
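
A Gauge R&R value can be computed from a balanced crossed study, in which each operator measures each test leak several times. The sketch below uses the common ANOVA variance-component approach and reports the result relative to total variation. Both choices are assumptions, since the study design and the reference (total variation or tolerance) should be agreed in the FAT specification. The data are placeholders.

```python
from math import sqrt
from statistics import mean


def gauge_rr_percent(data):
    """%GRR relative to total variation for data[part][operator][trial].

    Requires a balanced study with at least 2 parts, 2 operators, 2 trials.
    """
    p, o, r = len(data), len(data[0]), len(data[0][0])
    grand = mean(x for part in data for cell in part for x in cell)
    part_means = [mean(x for cell in part for x in cell) for part in data]
    oper_means = [mean(x for part in data for x in part[j]) for j in range(o)]
    cell_means = [[mean(cell) for cell in part] for part in data]

    # Sums of squares of the two-way ANOVA with interaction
    ss_part = o * r * sum((m - grand) ** 2 for m in part_means)
    ss_oper = p * r * sum((m - grand) ** 2 for m in oper_means)
    ss_inter = r * sum(
        (cell_means[i][j] - part_means[i] - oper_means[j] + grand) ** 2
        for i in range(p) for j in range(o)
    )
    ss_error = sum(
        (x - cell_means[i][j]) ** 2
        for i in range(p) for j in range(o) for x in data[i][j]
    )

    ms_part = ss_part / (p - 1)
    ms_oper = ss_oper / (o - 1)
    ms_inter = ss_inter / ((p - 1) * (o - 1))
    ms_error = ss_error / (p * o * (r - 1))

    # Variance components; negative estimates are clipped to zero
    var_repeat = ms_error
    var_inter = max(0.0, (ms_inter - ms_error) / r)
    var_oper = max(0.0, (ms_oper - ms_inter) / (p * r))
    var_part = max(0.0, (ms_part - ms_inter) / (o * r))

    var_grr = var_repeat + var_oper + var_inter
    var_total = var_grr + var_part
    return 100.0 * sqrt(var_grr / var_total) if var_total > 0 else 0.0


# Placeholder study: 3 certified test leaks, 2 operators, 3 trials each,
# readings in units of 1e-6 mbar·l/s.
study = [
    [[1.02, 0.98, 1.01], [1.00, 1.03, 0.99]],
    [[5.10, 4.95, 5.02], [5.05, 4.98, 5.08]],
    [[9.90, 10.05, 10.00], [10.10, 9.95, 10.02]],
]
print(f"Gauge R&R: {gauge_rr_percent(study):.1f}% of total variation")
```

Using certified test leaks as the "parts" keeps part variation known and stable, so the result mainly reflects the measurement system.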

b) Measurement System Capability: Cg and CgK for Leak Test Systems

Cg Index (Precision)

Cg ≥ 1.33 indicates strong repeatability.

CgK Index (Accuracy + Precision)

CgK ≥ 1.33 confirms that the system is both precise and unbiased—ideal for critical leak detection.

Capability indices show whether the measurement system is precise and accurate enough, relative to the tolerance, to be fit for production use.
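
Both indices can be calculated from repeated measurements of one certified reference leak. The sketch below assumes one widely used convention, in which 20% of the tolerance is compared with six standard deviations. Some company guidelines use other factors, so the formula should be fixed in the FAT specification. The tolerance and readings are placeholder values.

```python
from statistics import mean, stdev
from typing import Sequence


def cg(readings: Sequence[float], tolerance: float) -> float:
    """Cg = (0.2 * T) / (6 * s): repeatability relative to the tolerance T."""
    return (0.2 * tolerance) / (6.0 * stdev(readings))


def cgk(readings: Sequence[float], tolerance: float, reference: float) -> float:
    """Cgk = (0.1 * T - |mean - reference|) / (3 * s): adds the bias."""
    bias = abs(mean(readings) - reference)
    return (0.1 * tolerance - bias) / (3.0 * stdev(readings))


# Placeholder data: 10 repeated readings of a reference leak certified at
# 10.0 (units of 1e-6 mbar·l/s), with an assumed tolerance width of 20.0.
readings = [10.1, 9.9, 10.2, 10.0, 9.8, 10.1, 10.0, 9.9, 10.2, 10.0]
print(f"Cg  = {cg(readings, tolerance=20.0):.2f}")
print(f"Cgk = {cgk(readings, tolerance=20.0, reference=10.0):.2f}")
```

Because Cgk subtracts the bias before dividing, it can never exceed Cg. A large gap between the two points to a systematic offset rather than poor repeatability.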

Conclusion: A Strong FAT Ensures Reliable Leak Detection from Day One

A well‑executed Factory Acceptance Test protects your production quality, reduces risk, and ensures that the leak detection system performs with the required accuracy and repeatability. By focusing on calibration integrity, realistic test conditions, statistical capability, and traceable documentation, you can confidently approve your new system and start production with peace of mind.